Based on my experience attending multiple interviews, I’m sharing web scraping interview questions and answers here.

I’m sure going through this list will help with your job preparation. It should also give you enough understanding to handle most interview questions related to web scraping.

Let’s begin with the basics…


Web scraping is the technique of extracting and reading data from the internet. The collected data can be saved and reused for data analytics.

There are multiple steps involved in web scraping:

Scraped data is very useful in data analytics.

Python is the most preferred programming language for web scraping. It has many libraries to read and extract data from the internet, and to parse and manipulate that data.

The data we access on the internet through a browser is delivered as HTML, styled with CSS. To extract data from web pages, you need a basic understanding of HTML tags and CSS.
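To make the point about HTML tags concrete, here is a minimal sketch that pulls text out of elements by tag and class name, using only Python’s standard-library `html.parser`. The HTML snippet, the `restaurant` and `rating` class names, and the `TagTextExtractor` helper are all made up for illustration. In practice, a library such as Beautiful Soup is often used for this step.

```python
from html.parser import HTMLParser

# Hypothetical page fragment, invented for this example.
SAMPLE_HTML = """
<html><body>
  <h2 class="restaurant">Spice Garden</h2>
  <p class="rating">4.5</p>
  <h2 class="restaurant">Curry House</h2>
  <p class="rating">4.2</p>
</body></html>
"""


class TagTextExtractor(HTMLParser):
    """Collect the text of every <tag class="css_class"> element."""

    def __init__(self, tag, css_class):
        super().__init__()
        self.tag = tag
        self.css_class = css_class
        self.results = []
        self._inside = False

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs.
        classes = (dict(attrs).get("class") or "").split()
        if tag == self.tag and self.css_class in classes:
            self._inside = True
            self.results.append("")

    def handle_data(self, data):
        # Only keep text that appears inside a matching element.
        if self._inside:
            self.results[-1] += data

    def handle_endtag(self, tag):
        if tag == self.tag:
            self._inside = False


def extract_text(html, tag, css_class):
    parser = TagTextExtractor(tag, css_class)
    parser.feed(html)
    return [text.strip() for text in parser.results]


names = extract_text(SAMPLE_HTML, "h2", "restaurant")
ratings = extract_text(SAMPLE_HTML, "p", "rating")
print(names)
print(ratings)
```

This sketch does not handle matching tags nested inside each other, which is one reason a dedicated parsing library is usually the better choice for real pages.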

For storing data, formats such as JSON, XML, and YAML can be used. Read the difference between JSON, XML, and YAML.
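As a small illustration of storing scraped data, the sketch below writes a list of records to a JSON file and reads it back with Python’s built-in `json` module. The records, field names, and file name are invented for the example.

```python
import json
import os
import tempfile

# Invented sample records standing in for scraped data.
records = [
    {"name": "Spice Garden", "rating": 4.5},
    {"name": "Curry House", "rating": 4.2},
]


def save_records(path, rows):
    """Write rows to path as human-readable JSON."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(rows, f, indent=2, ensure_ascii=False)


def load_records(path):
    """Read the rows back from a JSON file."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# Use a temporary directory so the example leaves no files behind.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "restaurants.json")
    save_records(path, records)
    loaded = load_records(path)

print(loaded == records)
```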

(Give any example you have worked on. If you don’t have any experience, I would suggest writing a simple web scraping tool to extract data. Here, I’m explaining a web scraping tool I worked on to extract data from Zomato, a food and restaurant aggregator application.)

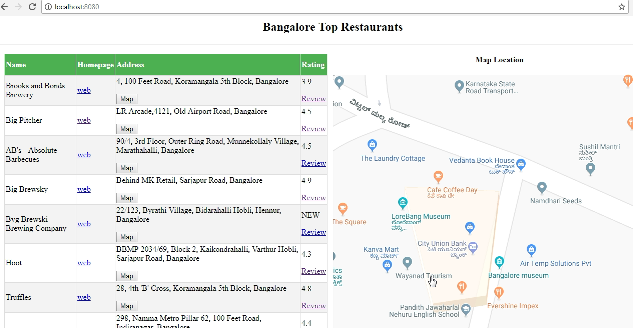

Here is a screenshot of the Zomato web scraping tool that extracts the top 10 restaurants in India. I used the Python Bottle framework to display the scraped data on web pages.

The tool also reads the geographical location of each restaurant to display it on Google Maps.

Here is the scraped restaurant review data from Zomato.

Note: Pick any example and explain how you did it. You don’t need to write complete code in an interview, but you have to explain the complete procedure and the steps you followed. The interviewer can ask you many questions to test your knowledge. When I attended the interview, the interviewer asked me to explain it on the whiteboard.

There are many Python libraries available for web scraping, like…

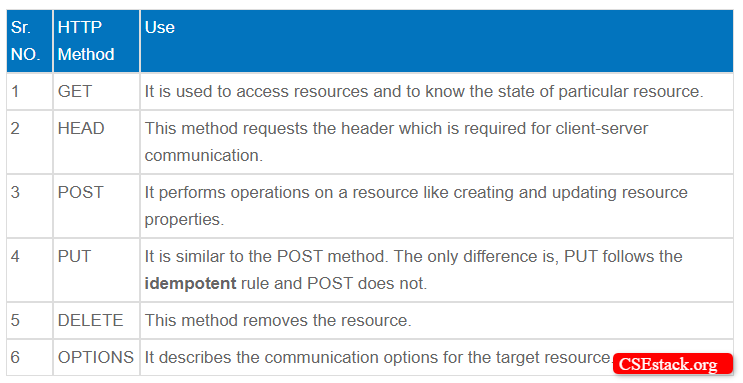

The `requests` module is used to read data from web pages. You pass the URL you want to read from, along with the HTTP request method and header information such as the encoding method, the response data format, and session cookies…
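The pieces listed above (URL, HTTP method, headers, cookies) can be sketched as follows. This example uses the standard library’s `urllib.request` rather than a third-party package so that it has no dependencies, and it only builds the request without sending it. The URL, header values, and cookie are placeholders, and `build_request` is a hypothetical helper.

```python
import urllib.request

# Placeholder values, not taken from any real site.
URL = "https://example.com/restaurants?page=1"
HEADERS = {
    "User-Agent": "my-scraper/0.1",
    "Accept": "application/json",     # response format we would like
    "Accept-Encoding": "identity",    # ask for an uncompressed body
    "Cookie": "sessionid=abc123",     # session cookie, if the site needs one
}


def build_request(url, headers, method="GET"):
    """Create a request object; nothing is sent over the network here."""
    return urllib.request.Request(url, headers=headers, method=method)


request = build_request(URL, HEADERS)
print(request.get_method())
print(request.full_url)
print(request.get_header("Accept"))

# Sending it would look like this (commented out so the sketch runs offline):
# with urllib.request.urlopen(request, timeout=10) as response:
#     body = response.read()
```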

In the HTTP response, you get data from the website. The data can be in any format, such as plain text, JSON, XML, or YAML, depending on the data format mentioned in the request and on what the server returns.

When you send an HTTP request to read data from the internet, the response comes with a status code.

Every status code has its meaning.
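For example, Python’s built-in `http.HTTPStatus` enum maps each numeric code to its standard phrase. The `describe_status` helper below is a hypothetical function written for this illustration; the category names follow the usual grouping of codes by their first digit.

```python
from http import HTTPStatus


def describe_status(code):
    """Return a short description such as '404 Not Found (client error)'."""
    status = HTTPStatus(code)  # raises ValueError for unknown codes
    if 200 <= code < 300:
        category = "success"
    elif 300 <= code < 400:
        category = "redirection"
    elif 400 <= code < 500:
        category = "client error"
    elif 500 <= code < 600:
        category = "server error"
    else:
        category = "informational"
    return f"{code} {status.phrase} ({category})"


for code in (200, 301, 403, 404, 429, 500):
    print(describe_status(code))
```

For a scraper, 403 and 429 are worth watching in particular, since they often indicate that the site is refusing or rate-limiting your requests.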

If you send requests to a website more often than a certain threshold allows, the website can block your IP address. Proxy IPs/servers can be used to access the web pages if your IP address is blocked.
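Here is a minimal sketch of routing requests through a proxy, and of switching to another proxy when one gets blocked, using the standard library’s `urllib.request.ProxyHandler`. The proxy addresses are placeholders from an IP range reserved for documentation, `ProxyPool` and `opener_for` are hypothetical helpers, and no request is actually sent.

```python
import itertools
import urllib.request

# Placeholder proxies (203.0.113.0/24 is reserved for documentation).
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


class ProxyPool:
    """Hand out proxies in round-robin order, skipping blocked ones."""

    def __init__(self, proxies):
        self._proxies = list(proxies)
        self._blocked = set()
        self._cycle = itertools.cycle(self._proxies)

    def mark_blocked(self, proxy):
        self._blocked.add(proxy)

    def next_proxy(self):
        # At most one full pass over the pool before giving up.
        for _ in range(len(self._proxies)):
            proxy = next(self._cycle)
            if proxy not in self._blocked:
                return proxy
        raise RuntimeError("all proxies are blocked")


def opener_for(proxy):
    """Build an opener that sends HTTP and HTTPS traffic through proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return urllib.request.build_opener(handler)


pool = ProxyPool(PROXIES)
first = pool.next_proxy()
pool.mark_blocked(PROXIES[1])   # pretend the second proxy got blocked
second = pool.next_proxy()      # the blocked proxy is skipped
opener = opener_for(second)
# opener.open("https://example.com", timeout=10) would go through the proxy.
print(first, second)
```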

Data analytics companies usually scrape millions of web pages, and their IP addresses often get blocked. To overcome this, they use a VPN (Virtual Private Network). There are many VPN service providers.

If you are not familiar with VPNs, here is how they work in layman’s terms.

How does VPN work?

You send a request to the VPN server. The VPN server reads the data from the website and sends the response back to your IP address.

As you can see, the VPN hides your IP address from the website’s server, so the website never learns it. A VPN provider has a pool of IP addresses. Even if one VPN IP address gets blocked, the provider can use another IP address from the pool.

Conclusion:

These are the most common web scraping interview questions and answers. If you have any further questions or doubts, write them in the comment section. I will keep updating this list with more questions.

I’m a Python developer and looking for a job. This is a really nice tutorial. Please keep adding similar posts. All explanations are lucid and laconic.

Thanks, Vadin. If you are looking for a job, check the other job-related articles. I hope they will help you. Best wishes!

Thank you. This article is very helpful for me, and I’m looking forward to more articles related to Python.

You’re welcome, Ankita! You can read my complete Python tutorial.

I was recently asked about the POST method. How and what is the use of POST in web scraping?

Hi Atul, POST is an HTTP method just like GET, PUT… If you scrape a web page’s source code, you can find it in the HTML, typically as the `method` attribute of a form tag.

Hi Aniruddha, regarding the 4th question above: how did you get the information, and how can I write code in VBA for it (Zomato food)? Please explain; it would be useful for me.

Please reply…

Hi Bala, I don’t have experience working with VBA. But I will surely share details about the Python script I wrote for Zomato web scraping in one of the coming tutorials.

Hi. I’m Tendai Mtiti. I’m studying web scraping for the first time, and I have found this kind of information very helpful. Please keep on posting. Thank youuu.

Hi Tendai. I’m glad you find it useful for your learning. This keeps us motivated to share more. 🙂 Best wishes!

How can I scrape a website that requires a login ID and an image captcha, without using Selenium?

If it’s your own website that you want to scrape, you can enable an API to log in and access the web pages. If it’s a third-party website, it is quite difficult without Selenium.